What AI Needs From Your Organization Before It Can Help

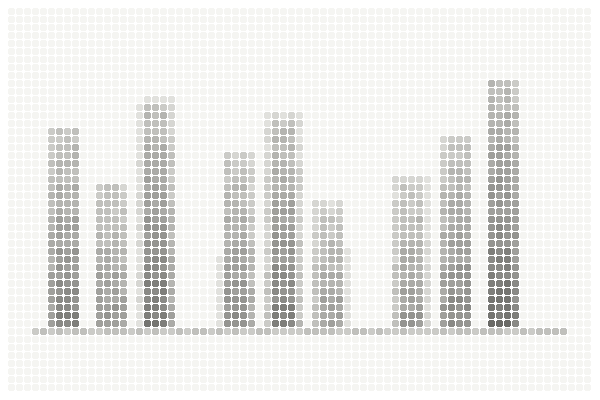

The four organizational foundations AI requires before it can deliver value. None of them are technical.

The conversation about AI is almost entirely about what AI can do for organizations. This article is about the other side of that equation: what organizations need to provide before AI can deliver on its promise. The prerequisites are not technical. They are organizational. And they are consistently the difference between AI investments that work and those that do not.

A CEO returning from a conference recently summarized the current state of the AI conversation with remarkable precision: "Everyone is talking about what AI can do. Nobody is talking about what it needs from us before it can do anything."

She was right. The discourse is overwhelmingly weighted toward capability — what the models can do, what the tools can automate, what the future holds. This is exciting and legitimate. The capability is real and growing rapidly.

But there is a gap between what AI can do in an ideal environment and what it can do inside a specific company with specific data, specific processes, specific people, and specific constraints. That gap is not a technology gap. It is a readiness gap. And readiness is defined not by the sophistication of your technology infrastructure but by the state of four organizational foundations that are entirely within your control.

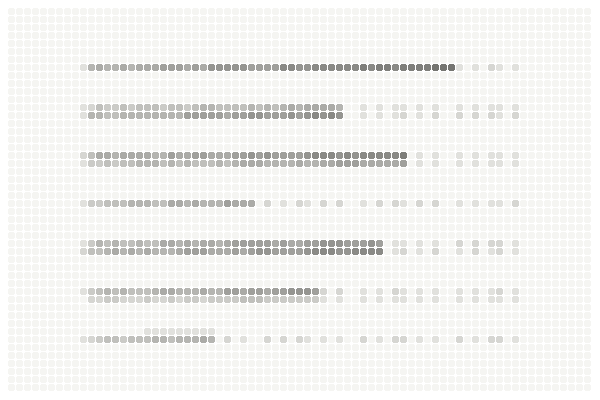

Foundation 1: Documented Processes

AI automates or augments processes. It cannot work with a process it does not understand. And the only way it can understand a process is through documentation — explicit, accurate, current documentation that captures how the work actually happens, including the exceptions, workarounds, and informal steps that are invisible in official process maps.

Most companies have some process documentation. Very few have documentation that accurately reflects current reality. The gap grows naturally over time as teams adapt, find shortcuts, and develop informal practices that never get captured formally. This gap is not a failing — it is a normal consequence of how organizations evolve.

But it becomes a critical problem when an AI tool is configured based on the documentation rather than the reality. The tool automates the documented version of the process, which may bear limited resemblance to what people actually do. The result is a tool that works correctly by its own logic but incorrectly by the logic of the people using it.

What AI needs: For the specific process you intend to augment or automate, a current, complete, step-by-step map that includes every handoff, every exception path, every manual workaround, and every "we just handle that informally" step. This map should be created by observing the work, not by reviewing existing documentation. The people who do the work should validate it before anyone shows it to a vendor.
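For teams that prefer to keep the process map in a structured form, the requirements above can be sketched as a small checklist in Python. This is an illustrative sketch only: the `Step` fields, the `kind` labels, and the invoice example are hypothetical and do not come from any particular tool or framework.

```python
from dataclasses import dataclass, field


@dataclass
class Step:
    """One step in a process map (all field names are illustrative)."""
    name: str
    owner: str
    kind: str = "official"  # "official", "exception", "workaround", or "informal"
    observed: bool = False  # captured by watching the work, not by reading old docs
    validated_by: list = field(default_factory=list)  # people who confirmed the step


def open_items(steps):
    """List the reasons a map is not yet ready to show to a vendor."""
    issues = []
    for s in steps:
        if not s.observed:
            issues.append(f"{s.name}: not yet observed in practice")
        if not s.validated_by:
            issues.append(f"{s.name}: not validated by the people who do the work")
    return issues


def handoffs(steps):
    """Return pairs of consecutive steps where ownership changes."""
    return [(a.name, b.name) for a, b in zip(steps, steps[1:]) if a.owner != b.owner]


# Hypothetical example: a three-step invoice process.
steps = [
    Step("Receive invoice", "AP clerk", observed=True, validated_by=["AP clerk"]),
    Step("Fix vendor ID in spreadsheet", "AP clerk", kind="workaround", observed=True),
    Step("Approve payment", "Finance manager"),
]

issues = open_items(steps)
owner_changes = handoffs(steps)
```

An empty `issues` list is a reasonable signal that the map reflects observed, validated reality. The `owner_changes` list makes the handoffs explicit, and handoffs are where informal steps tend to hide.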

Foundation 2: Honest Data

Every AI system's output quality is bounded by its input quality. This principle is well understood in theory and consistently violated in practice. The cause is rarely negligence. It is that the actual state of an organization's data is often significantly different from the assumed state.

Leadership typically sees data through dashboards: aggregated, cleaned, and formatted for decision-making. These dashboards are useful for their purpose, but they obscure the raw data quality underneath. Duplicate records, inconsistent formats, missing fields, and definitional inconsistencies are smoothed over by the visualization layer. The AI tool will not have this luxury. It works with the raw data.

What AI needs: An honest assessment of the data it will use, based on the source version rather than the dashboarded one. Specifically, it needs data that is deduplicated (or at least where duplicates are identified), consistently formatted across sources, reasonably complete in the fields that matter, and consistently defined across departments. Achieving this perfectly is unnecessary. Knowing where the gaps are is essential.
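A first pass at finding those gaps can be very small. The sketch below, in plain Python, counts duplicates, missing values, and format variants in a handful of source records. The function name `audit_records`, its parameters, and the sample customer records are all hypothetical, and a real audit would read from the actual source systems rather than an in-memory list.

```python
import re
from collections import Counter


def audit_records(records, key_fields, required_fields, format_field):
    """Build a small gap report for a list of dict records (illustrative only)."""
    # Duplicates: records that share the same key after trimming and lowercasing.
    keys = Counter(
        tuple(str(r.get(f, "")).strip().lower() for f in key_fields)
        for r in records
    )
    duplicate_rows = sum(n - 1 for n in keys.values() if n > 1)

    # Completeness: how many records are missing each required field.
    missing = {
        f: sum(1 for r in records if r.get(f) in (None, ""))
        for f in required_fields
    }

    # Format consistency: reduce each value to a shape (digits -> 9, letters -> A)
    # and count how many distinct shapes appear in the chosen field.
    def shape(value):
        return re.sub(r"[A-Za-z]", "A", re.sub(r"\d", "9", str(value)))

    formats = Counter(shape(r[format_field]) for r in records if r.get(format_field))

    return {
        "total": len(records),
        "duplicate_rows": duplicate_rows,
        "missing": missing,
        "formats": dict(formats),
    }


# Hypothetical sample: three customer records pulled from two source systems.
records = [
    {"email": "a@x.com", "name": "Ann", "signup": "2024-01-05"},
    {"email": "A@X.com ", "name": "Ann", "signup": "01/05/2024"},
    {"email": "b@x.com", "name": "", "signup": "2024-02-10"},
]

report = audit_records(
    records,
    key_fields=["email"],
    required_fields=["name", "signup"],
    format_field="signup",
)
```

For this sample, `report` shows one duplicate row, one missing name, and two date formats in the same field. The point is not to fix anything yet but to make the gaps visible. Definitional inconsistencies across departments cannot be detected this way and still need a conversation.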

The data foundation is explored in depth, including a practical two-week audit

Foundation 3: Aligned Stakeholders

AI implementations cross organizational boundaries. They involve technology teams, operational teams, finance teams, and end users — each with different priorities, different definitions of success, and different relationships to the change being introduced.

The most dangerous condition for an AI implementation is assumed alignment — where every stakeholder believes the project is aimed at their priority because nobody has explicitly compared priorities. The CTO assumes the goal is technical sophistication. The CFO assumes the goal is cost reduction. The COO assumes the goal is minimal disruption. The vendor, sitting in the room with all three, nods at everyone.

This assumed alignment holds together during planning and procurement. It fractures during implementation, when the inevitable trade-offs between competing priorities need to be made and nobody has a previously agreed framework for making them.

What AI needs: Before the project begins, the key stakeholders must agree — explicitly, in writing — on what success looks like. Not three definitions of success. One. Specific enough that it could be measured by anyone in the organization. The discomfort of this conversation before the project starts is a fraction of the cost of having it in month four, when budgets have been spent and positions have hardened.

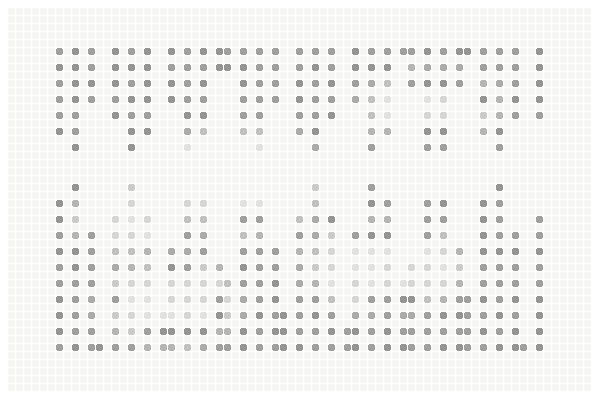

These four foundations map directly to the five-dimension readiness scoring framework

Foundation 4: Change Capacity

Every AI tool requires someone to work differently. The scope of the change varies — sometimes it is a minor interface shift, sometimes it is a fundamental restructuring of daily workflows. But the need for change is universal. And the organization's capacity to absorb change is not unlimited.

Change capacity is not about willingness. Most teams are willing to try new tools, especially when the potential benefits are clear. It is about bandwidth. Teams that are already under pressure — managing daily operations, handling escalations, meeting deadlines — have limited cognitive and temporal space for learning new systems and adapting established routines.

If the AI implementation arrives during a period of high operational pressure, even a well-designed tool with genuine value will struggle to gain adoption. Not because people resist it, but because they do not have the space to learn it properly and integrate it into their existing work patterns.

What AI needs: An honest assessment of the team's current bandwidth and a realistic plan for creating the space that adoption requires. This might mean timing the rollout for a lower-pressure period. It might mean temporarily reducing other workloads to create learning time. It might mean a phased rollout that starts with volunteers rather than the entire team. The specific approach matters less than the acknowledgment that adoption requires investment, not just of money but of time and attention.

Does the Sequence of Preparation Matter?

These four foundations are not independent. (I almost wrote "they are deeply interconnected" but that is the kind of sentence that sounds meaningful without saying anything specific, so let me be specific.) They build on each other in a fixed order that, when respected, makes each subsequent step easier.

Process documentation comes first because it reveals the reality of how work happens. Data assessment comes second because it reveals whether the inputs the tool needs are available and reliable. Stakeholder alignment comes third because it requires a shared understanding of what the tool is supposed to achieve — which depends on understanding the process and the data constraints. Change capacity assessment comes fourth because it determines how and when the tool can be introduced — which depends on all three previous foundations.

Companies that skip steps in this sequence or attempt them out of order encounter more friction and higher costs during implementation. Not because the sequence is sacred, but because each step produces information that makes the next step more efficient and more accurate.

The most effective way to prepare for AI is to do the work that makes AI successful before anyone talks about specific tools. Documentation before digitization. Standardization before automation. Understanding before AI. Every shortcut in this sequence costs more than the time it saves.

What Is the Most Practical First Step?

If this seems like a significant amount of preparation before even beginning to evaluate AI tools, consider the alternative. The alternative is evaluating and purchasing tools before you know whether your processes are documented, your data is ready, your stakeholders are aligned, and your team has the capacity to absorb the change. The alternative is discovering these gaps during implementation, when the cost of addressing them is multiplied by the complexity of an active project and the political difficulty of admitting that the prerequisites were not met.

Two to four weeks of honest assessment — spread across the four foundations, involving the right people, documented with the goal of clarity rather than reassurance — is the most cost-effective investment in the entire AI journey. It is not the exciting part. It is the part that makes the exciting part work.

Once the foundations are in place, the build vs. buy decision becomes clearer

A Contrarian View on Readiness

Here is something I hesitate to say because it contradicts the entire premise of readiness assessments: sometimes the best way to discover your gaps is to start a small, cheap, contained AI project and let it fail informatively.

Not every organization can afford (or has the patience for) a multi-week assessment before doing anything. For some teams, a $5,000 pilot that reveals their data is messy teaches the same lesson as a $20,000 assessment — just more painfully and more memorably. The assessment is the better path. But it is not the only path. And I would rather a company learn through a small, controlled failure than never start at all because the preparation felt overwhelming.

Start with a quick assessment. The free AI Value Diagnostic at diagnostics.vectorcxo.com evaluates your organization across these foundational dimensions in about 10 minutes. It gives you a profile-matched result that identifies which foundations need attention before your next AI investment.