The AI Readiness Assessment That Saves Everything

A five-dimension framework for assessing AI readiness before spending on tools. Covers process clarity, data maturity, stakeholder alignment, change capacity, and decision frameworks.

Most companies begin their AI journey by evaluating tools. They should begin by evaluating themselves. This is the assessment framework that consistently prevents six-figure mistakes — and it starts with questions that have nothing to do with technology.

A CFO at a 350-person manufacturing company told me something recently that I have not stopped thinking about. He said: “We spent four months implementing an AI tool before anyone asked whether we were ready for it. Turns out we were not. That question would have saved us $160,000.”

He was not angry at the vendor. The tool worked fine. He was frustrated that nobody in the room had thought to assess whether the organization could absorb what it was buying before the buying happened.

His experience is remarkably common. An AI readiness assessment is the most predictive step in the entire AI investment process, and it is the one most consistently skipped. Not because people do not care, but because the momentum of the buying process — board excitement, vendor demos, competitive pressure — makes pausing feel like an obstacle rather than a discipline.

When an AI project disappoints, the common diagnosis is that the wrong tool was chosen, the vendor underdelivered, or the technology was not mature enough. Sometimes those things are true. But more often, the root cause is simpler and harder to accept: the organization was not ready for what it bought.

Readiness is not a glamorous concept. Nobody puts "we assessed our readiness" on a conference agenda. But it is the single most predictive factor in whether an AI investment delivers value or becomes an expensive experiment.

What Does AI Readiness Actually Mean?

AI readiness is not about whether your company is technologically sophisticated enough to use AI tools. Most modern AI products are designed to be accessible to companies with modest technical infrastructure. The tools are not the bottleneck.

Readiness is about whether your organization has the foundational conditions that allow AI tools to deliver value once they are implemented. (I use "foundational" carefully, because it sounds like consultant-speak, but there is no better word for what I mean.) These conditions fall into five dimensions, each of which can be assessed independently and each of which has the power to derail an implementation on its own.

Dimension 1: Process Clarity

Before you can automate or augment a process with AI, you need to know what the process actually is. Not what the process documentation says it is — what it actually looks like when real people do real work on an ordinary day.

This distinction matters more than it might seem. In most mid-market companies, process documentation is outdated, incomplete, or describes an idealized version of how work should flow rather than how it actually flows. The gap between documented process and actual process is where AI implementations encounter their first serious friction.

A useful test: pick the process you are most likely to want AI to improve. Ask three people who touch that process to independently describe it, step by step, including every exception, workaround, and informal handoff. If you get three meaningfully different descriptions — and you almost certainly will — you have a process clarity gap that needs to be addressed before any tool can be scoped against it.

The investment required to close this gap is usually modest: a few days of observation and documentation. The cost of not closing it is usually significant: an AI tool configured against an incomplete or inaccurate picture of the work, leading to low adoption and expensive reconfiguration.

Dimension 2: Data Maturity

Every AI tool runs on data. The quality of the output is bounded by the quality of the input. This is not a new insight — but the practical implications are underestimated during the buying process.

Data maturity is not about whether you have data. Every company does. It is about whether your data is clean enough, consistent enough, and accessible enough to be useful to an AI system without significant preprocessing.

The questions that reveal your actual data maturity are specific. Can you export a clean, structured sample of the data this tool would use — in under an hour? Does every department that touches this data define its key fields the same way? Do you know the percentage of records with missing, duplicate, or inconsistent values? Has anyone audited this data in the last twelve months?

If the answer to two or more of these is no, you are not ready for an AI tool. You are ready for a data cleanup project. That is not a failure — it is the most valuable discovery you can make before spending money on technology.
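The four questions and the two-or-more rule can be turned into a repeatable check. Below is a minimal Python sketch, not a prescribed tool: it profiles a sample export to answer the third question, then applies the two-or-more-"no" rule to all four answers. The record layout, the field names (`customer_id`, `region`, `email`), the use of `customer_id` as the duplicate key, the allowed region codes, and the helper names `profile` and `ready_for_ai_tool` are all hypothetical stand-ins for your own data.

```python
from collections import Counter

# Hypothetical sample export; real rows would come from your own CRM, ERP, etc.
records = [
    {"customer_id": "C001", "region": "EMEA", "email": "a@example.com"},
    {"customer_id": "C002", "region": "emea", "email": ""},
    {"customer_id": "C002", "region": "APAC", "email": "b@example.com"},
    {"customer_id": "C003", "region": None, "email": "c@example.com"},
]

REQUIRED_FIELDS = ("customer_id", "region", "email")  # hypothetical schema
ALLOWED_REGIONS = {"EMEA", "APAC", "AMER"}  # hypothetical canonical codes


def profile(rows, key="customer_id"):
    """Percentage of rows that are missing, duplicated, or inconsistent."""
    total = len(rows)
    if total == 0:
        return {"missing_pct": 0.0, "duplicate_pct": 0.0, "inconsistent_pct": 0.0}

    # Missing: any required field absent, None, or an empty string.
    missing = sum(
        1 for r in rows if any(r.get(f) in (None, "") for f in REQUIRED_FIELDS)
    )
    # Duplicate: every row whose key value appears more than once.
    counts = Counter(r.get(key) for r in rows)
    duplicate = sum(1 for r in rows if counts[r.get(key)] > 1)
    # Inconsistent: a non-empty region that is not one of the canonical codes.
    inconsistent = sum(
        1 for r in rows if r.get("region") and r["region"] not in ALLOWED_REGIONS
    )
    return {
        "missing_pct": round(100 * missing / total, 1),
        "duplicate_pct": round(100 * duplicate / total, 1),
        "inconsistent_pct": round(100 * inconsistent / total, 1),
    }


def ready_for_ai_tool(answers):
    """Rule from the text: two or more 'no' answers means data cleanup comes first."""
    no_count = sum(1 for answer in answers.values() if not answer)
    return no_count < 2


print(profile(records))
# {'missing_pct': 50.0, 'duplicate_pct': 50.0, 'inconsistent_pct': 25.0}

answers = {
    "clean_export_under_an_hour": True,
    "shared_field_definitions": False,
    "know_error_rates": True,  # answered by running profile() above
    "audited_in_last_12_months": False,
}
print(ready_for_ai_tool(answers))  # False: two 'no' answers
```

In practice the rows would come from a real export (reading a CSV with Python's `csv.DictReader` yields the same list-of-dictionaries shape), and the definitions of "missing" and "inconsistent" should be the ones your departments actually agree on, which is the point of the second question.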

The companies that clean up their data first and buy tools second spend 40–60% less on implementation and reach production value two to three times faster. The unsexy work comes first. Always.

Dimension 3: Stakeholder Alignment

AI implementations touch multiple parts of an organization simultaneously. The technology team scopes it. Finance approves it. Operations absorbs it. End users interact with it daily. Each of these groups has a different definition of success, a different set of concerns, and a different relationship to the change being introduced.

Stakeholder alignment means these groups have agreed — explicitly, not implicitly — on what success looks like before the project begins. Not in vague terms like "improved efficiency" but in specific, measurable terms that everyone in the room could verify independently.

A practical test: get the CTO, CFO, and COO (or their equivalents) into a room and ask them to complete one sentence together: "This AI project succeeds when ___." If they cannot agree on that sentence within fifteen minutes, you have an alignment gap that will surface — expensively — during implementation.

The most common form of misalignment is not disagreement. It is the assumption of agreement that has never been tested. Everyone believes they are working toward the same goal because nobody has asked the question directly enough to discover otherwise.

Dimension 4: Change Capacity

Every AI tool requires someone to do something differently. Sometimes the change is small — a new interface, an additional step, a different data entry point. Sometimes it is significant — a fundamentally restructured workflow, a new role, a changed relationship between teams.

Change capacity is your organization's demonstrated ability to absorb these kinds of shifts without breaking what already works. The key word is "demonstrated." Not theoretical capacity. Not willingness in principle. Actual evidence from recent experience.

A useful indicator: has your organization successfully adopted a new tool or workflow in the last eighteen months? If yes, what made it work? If no — or if the last adoption was difficult, slow, or incomplete — that history is predictive of how the next one will go.

Change capacity is not fixed. It can be built. But it cannot be assumed, and it cannot be bypassed by buying a better tool. The most sophisticated AI system in the world will fail if the people who need to use it are not prepared, supported, and involved in the transition.

Dimension 5: Decision Framework

The final dimension is whether your organization has a structured way to evaluate, pilot, scale, and — critically — kill technology investments that are not working.

Most companies have a process for approving new technology. Far fewer have a process for honestly evaluating whether it is delivering value after implementation. And very few have a clear, politically safe mechanism for stopping a project that is not working before more money is spent.

The absence of a kill mechanism is one of the most expensive gaps in enterprise AI. It leads to what might be called "zombie projects" — implementations that are not working well enough to justify their cost but are not bad enough (or politically safe enough) to cancel. They persist, consuming budget and organizational attention, because nobody has the framework or the authority to pull the plug.

How Do You Score Your Readiness?

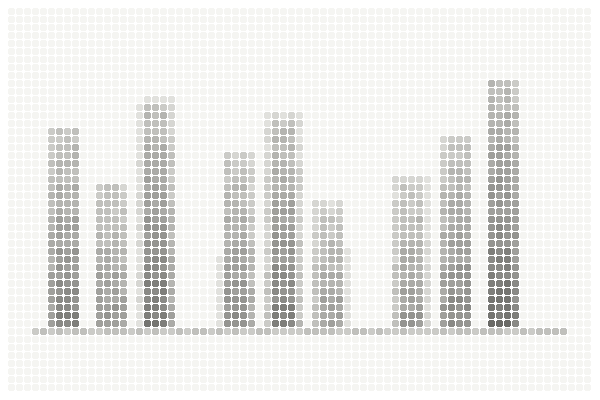

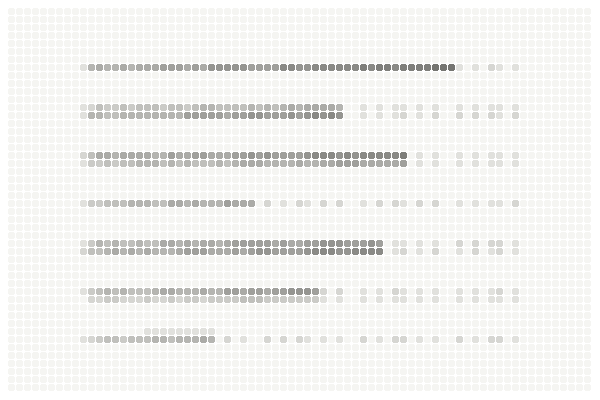

Each of these five dimensions can be assessed on a simple scale. Be ruthlessly honest: the exercise is only as valuable as the honesty you bring to it.

Score each dimension from 1 to 5, where 1 represents significant gaps and 5 represents strong capability. Then total the scores.

A total of 20–25 suggests you are ready to begin evaluating specific tools and vendors for a specific use case. Your foundations are solid enough that the implementation risks are manageable.

A total of 15–19 suggests you have targeted gaps that should be addressed before committing significant budget. You may be ready for a small, contained pilot but not for a broad implementation.

A total of 10–14 suggests foundational work is needed before any tool conversation will be productive. The priority is process documentation, data cleanup, and stakeholder alignment.

A total below 10 suggests starting with the basics: documenting your core processes, understanding the state of your data, and building the internal alignment that makes technology investments viable.
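The scoring arithmetic is simple, but writing it down removes any ambiguity about the band edges. This is a minimal Python sketch assuming each dimension receives a whole-number score from 1 to 5; the dictionary keys and the function name `readiness_band` are hypothetical, and the band labels paraphrase the thresholds above. Because the lowest possible score per dimension is 1, the total can never fall below 5, so "below 10" covers totals of 5 through 9.

```python
# The five dimensions, keyed by hypothetical identifiers.
DIMENSIONS = (
    "process_clarity",
    "data_maturity",
    "stakeholder_alignment",
    "change_capacity",
    "decision_framework",
)


def readiness_band(scores):
    """Total the five 1-5 scores and map the total to a readiness band."""
    missing = [d for d in DIMENSIONS if d not in scores]
    if missing:
        raise ValueError(f"missing scores for: {missing}")
    for name in DIMENSIONS:
        if not 1 <= scores[name] <= 5:
            raise ValueError(f"{name} must be scored 1-5, got {scores[name]}")

    total = sum(scores[d] for d in DIMENSIONS)  # ranges from 5 to 25
    if total >= 20:
        band = "ready: evaluate tools and vendors for a specific use case"
    elif total >= 15:
        band = "targeted gaps: address them; a small, contained pilot at most"
    elif total >= 10:
        band = "foundational work: processes, data cleanup, alignment"
    else:
        band = "basics first: document processes, understand data, build alignment"
    return total, band


example = {
    "process_clarity": 4,
    "data_maturity": 2,
    "stakeholder_alignment": 3,
    "change_capacity": 4,
    "decision_framework": 2,
}
print(readiness_band(example))  # total of 15 falls in the targeted-gaps band
```

One caveat on the design: a single total can mask one very weak dimension. Since each dimension can derail an implementation on its own, you might also flag any dimension scored 1 regardless of the total; that extra check is a suggested addition, not part of the framework as described.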

A practical next step. If you want a more structured version of this assessment — one that covers 18 dimensions across process clarity, data maturity, stakeholder alignment, and more — there is a free AI Value Diagnostic at diagnostics.vectorcxo.com that takes about 10 minutes and provides a profile-matched result based on where your organization actually stands.

Why Does This Assessment Get Skipped?

If readiness is so important, why do most companies skip the assessment and go straight to vendor evaluation?

Three reasons, all of them understandable.

First, momentum. Once the AI conversation starts — once the board is excited, the budget is allocated, and the vendor demos have begun — there is enormous organizational pressure to keep moving forward. Pausing to assess readiness feels like slowing down. It feels like the person suggesting it is being negative or obstructionist. In reality, it is the highest-value use of time in the entire process. But it does not feel that way in the moment.

Second, the readiness assessment surfaces uncomfortable truths. Data is messier than reported. Processes are less documented than assumed. Stakeholders are less aligned than believed. These discoveries are valuable precisely because they are uncomfortable — but nobody enjoys being the person who delivers them.

Third, nobody in the room is incentivized to suggest it. The vendor wants to sell. The internal champion wants to build. The consulting firm wants to scope. The person who says "let us pause and assess whether we are ready" is not advancing anyone's immediate interests — even though they are protecting everyone's long-term interests.

This is the structural problem at the heart of most AI investment decisions. The readiness assessment is the most valuable step and the one least likely to happen organically. It needs to be intentional.

What Happens When You Actually Do It?

The companies that invest two to four weeks in an honest readiness assessment before evaluating vendors report three outcomes.

They scope more accurately. Because the assessment reveals the actual state of data, processes, and organizational readiness, the vendor conversations that follow are grounded in reality rather than assumptions. Implementation timelines and budgets are more accurate, and the surprises that typically emerge in month three are surfaced before the contract is signed.

They adopt more successfully. Because the end users are involved in the assessment process — their workflows are observed, their concerns are heard, their input shapes the requirements — the resulting implementation fits the reality of how work actually happens. Adoption resistance drops significantly when people feel their perspective was part of the decision.

They spend less overall. This seems counterintuitive — how does adding a step reduce cost? Because the assessment prevents the most expensive category of AI spending: money spent implementing tools on foundations that are not ready. The cost of a two-week assessment is a fraction of the cost of a six-month implementation that needs to be reworked because the foundation was not there.

The AI readiness assessment is not the exciting part of the journey. It is the part that makes the exciting part actually work. The companies that do it are not the ones with the biggest budgets or the most advanced technology teams. They are the ones disciplined enough to ask honest questions before committing significant resources.

That discipline is rare. It is also the most reliable predictor of AI investment success that exists.