Measuring AI ROI Without Fooling Yourself

A practical before-and-after framework for measuring whether your AI investment is actually working.

Most companies measure AI ROI using the metrics the vendor provides: usage rates, processing speed, accuracy percentages. These are operational indicators. They tell you the tool is running. They do not answer the question the business actually cares about: "Are we better off than we were before?"

A finance director at a mid-market company in the healthcare supply chain told me she had just killed a working AI tool — not because it failed, but because when she calculated the real cost of running it, the math did not work.

The license was $4,200 a month. That was the number everyone knew. But when she traced every person who touched the tool in any way (maintaining the integration, checking the outputs, training new hires, troubleshooting issues), the total was $19,000 a month. The value the tool delivered, honestly measured, was about $8,000 a month in saved labor.

She was paying $19,000 to save $8,000. And nobody had noticed because the $4,200 was the only number anyone tracked.

The challenge of measuring AI ROI is not that it is technically difficult. It is that the incentive structure surrounding the measurement makes honest assessment harder than it should be.

How Do You Build an Honest Before-and-After Comparison?

The simplest and most honest approach to measuring AI ROI is a direct before-and-after comparison using the same metrics. This requires documenting the "before" state with the same rigor you will apply to measuring the "after" state — which means the measurement framework should be established before the tool is implemented, not after.

Three measurements form the foundation of the before-and-after ledger. First, process duration: how long does the target process take, end to end, as measured by your own team? Not the vendor's estimate and not a theoretical calculation, but an actual measurement of the time elapsed from start to finish, including waiting time, handoffs, and delays. Measure this before the tool is deployed and again after 90 days of operation.

Second, error rate: how many errors, exceptions, or rework events occur per week in the target process? Include the errors that people catch and fix quietly — these are real costs even though they do not appear in error logs. Measure before deployment and again at 90 days.

Third, time allocation: how much of the team's time is spent on this process versus other work? Record it in actual hours per week, not percentages. This reveals whether the tool has genuinely freed up capacity or simply shifted the work from one type of task to another. As with the other two measurements, take it before deployment and again at 90 days.

The difference between the before and after measurements across these three dimensions is your real ROI narrative. Not a projected number. Not a vendor dashboard. An actual comparison using metrics you defined and measured yourself.
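One way to keep the comparison honest is to record both snapshots in the same structure and compute the differences mechanically. The sketch below is a minimal illustration in Python, not a prescribed tool: the class name, the field names, and every sample figure are hypothetical and chosen only to show the shape of the ledger.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Snapshot:
    """One measurement of the target process (field names are illustrative)."""
    process_hours: float        # end-to-end duration, including waits and handoffs
    errors_per_week: float      # errors, exceptions, and quietly fixed rework
    team_hours_per_week: float  # actual hours the team spends on the process


def ledger_delta(before: Snapshot, after: Snapshot) -> dict[str, float]:
    """Return after-minus-before for each metric; negative means improvement."""
    return {
        "process_hours": after.process_hours - before.process_hours,
        "errors_per_week": after.errors_per_week - before.errors_per_week,
        "team_hours_per_week": after.team_hours_per_week - before.team_hours_per_week,
    }


# Hypothetical figures: measured before deployment and again at 90 days.
before = Snapshot(process_hours=36.0, errors_per_week=14.0, team_hours_per_week=50.0)
after = Snapshot(process_hours=30.0, errors_per_week=9.0, team_hours_per_week=42.0)

delta = ledger_delta(before, after)
for metric, change in delta.items():
    print(f"{metric}: {change:+.1f}")
```

The point of using one structure for both snapshots is that the "before" state has to be filled in with the same fields and the same units as the "after" state, which is hard to do retroactively.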

What Hidden Costs Should You Be Tracking?

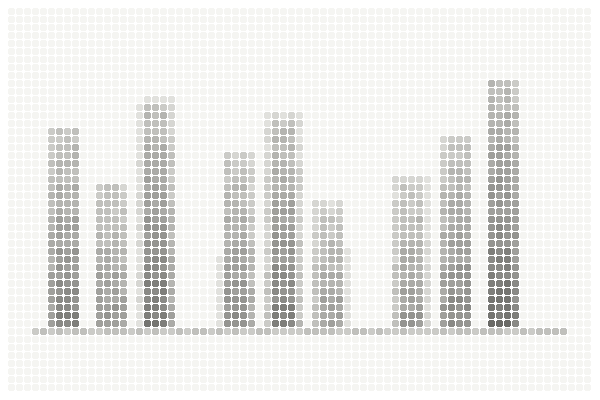

The before-and-after ledger captures the value side of the equation. The hidden cost inventory captures the expense side — specifically, the costs that do not appear on the vendor invoice but are very real to the organization.

Integration maintenance is the most common hidden cost. Someone on the IT team is spending time keeping the connection between the AI tool and your existing systems running. API updates, data format changes, connection monitoring, troubleshooting failures — this work is real and recurring. Estimate the hours per month and multiply by the team member's fully loaded cost.

Output verification is the second most common hidden cost. In many AI implementations, someone is informally assigned to "check the AI's work" before outputs go to clients, customers, or downstream processes. This checking takes time — sometimes significant time — and it often goes untracked because it is absorbed into someone's existing role rather than scoped as a distinct task.

Training and onboarding adds ongoing cost that extends beyond the initial implementation. Every new hire who will use the tool needs to be trained. If the tool is complex enough that training takes more than a few hours, this cost accumulates meaningfully over time as the team evolves.

Support and troubleshooting captures the time spent when the tool does not work as expected. Internal help desk tickets, vendor support interactions, time spent diagnosing issues — all of this is real cost that is rarely budgeted for but consistently incurred.

The total of these hidden costs, added to the license fee, gives you the true monthly cost of the AI tool. Compare this total against the value measured in the before-and-after ledger. If the total cost exceeds the value, the tool is not delivering positive ROI — regardless of what the vendor dashboard shows.
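The arithmetic can be sketched in a few lines of Python. The license fee, the $19,000 total, and the $8,000 of value come from the finance director's story above. Everything else is an assumption made for illustration: the hours and hourly rates for each hidden cost are hypothetical, picked so that the total matches her figure, and the sketch assumes her $19,000 included the license.

```python
def monthly_labor_cost(hours_per_month: float, loaded_hourly_rate: float) -> float:
    """Hours spent per month times the person's fully loaded hourly cost."""
    return hours_per_month * loaded_hourly_rate


LICENSE_FEE = 4_200.0    # monthly license, from the story above
MONTHLY_VALUE = 8_000.0  # labor saved per month, from the story above

# Hypothetical breakdown: hours and rates are illustrative, not reported data.
hidden_costs = {
    "integration_maintenance": monthly_labor_cost(40, 110),
    "output_verification": monthly_labor_cost(90, 70),
    "training_and_onboarding": monthly_labor_cost(30, 70),
    "support_and_troubleshooting": monthly_labor_cost(25, 80),
}

true_monthly_cost = LICENSE_FEE + sum(hidden_costs.values())
net_monthly_value = MONTHLY_VALUE - true_monthly_cost

print(f"License fee:        ${LICENSE_FEE:>10,.0f}")
for name, cost in hidden_costs.items():
    print(f"{name + ':':<30}${cost:>10,.0f}")
print(f"True monthly cost:  ${true_monthly_cost:>10,.0f}")
print(f"Net monthly value:  ${net_monthly_value:>10,.0f}")
```

With these assumed inputs the hidden costs come to $14,800, the true monthly cost to $19,000, and the net monthly value to minus $11,000. The license fee alone would have suggested a surplus of $3,800.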

The hidden cost inventory is part of a larger total cost of ownership framework.

Why Should You Wait 90 Days to Measure?

The temptation to measure early is understandable. Leadership wants to know if the investment is working. The vendor wants to demonstrate value quickly. The project team wants validation.

But measuring at 30 days consistently produces misleading results. The first month after deployment is dominated by learning-curve effects, configuration adjustments, and the novelty factor. Usage may be high because the tool is new and people are exploring it. Alternatively, usage may be low because people are still learning and have not yet integrated the tool into their routine.

Neither pattern is indicative of long-term value. The learning curve flattens. The novelty wears off. Configuration adjustments settle. By 90 days, you are seeing the steady-state reality — how the tool performs when it is no longer new, when the initial excitement (or frustration) has passed, and when people are either using it as part of their routine or not.

Patience in measurement is as important as rigor in measurement. A 30-day assessment that triggers premature celebration or premature cancellation is more expensive than waiting for the data to stabilize.

The most honest AI ROI measurement is also the simplest: find the person who uses the tool daily and ask them, "If this tool disappeared tomorrow, what would you do differently?" Their answer tells you more about the tool's real value than any dashboard ever will.

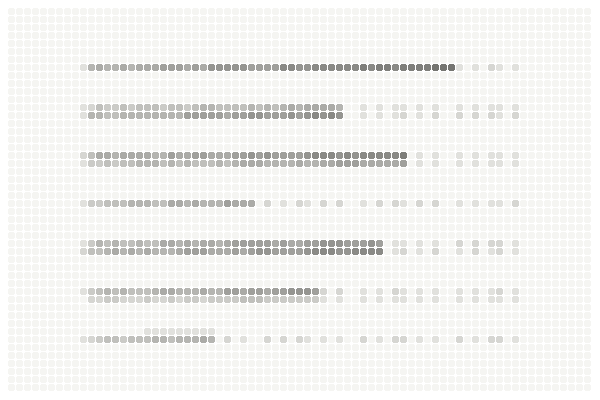

When ROI is disappointing, adoption failure is often the underlying cause.

What Is the Simplest Test of AI Value?

Beyond the quantitative measurement, there is a qualitative test that cuts through every metric, every dashboard, and every internal narrative to the question that actually matters: would anyone miss this tool if it disappeared?

Ask the primary users of each AI tool one question: "If this tool stopped working tomorrow, what would you do?" The responses fall into clear categories.

"We would be in serious trouble" — the tool has become genuinely embedded in the work. It is delivering real value and its absence would create a meaningful operational gap. This is the strongest possible validation.

"We would go back to the old way — it would take longer but we would manage" — the tool provides convenience but not transformation. It is delivering some value, but the value may not justify the total cost. Worth continuing but worth scrutinizing.

"Honestly, I am not sure anyone would notice" — the tool is running but not contributing. It is consuming budget without delivering commensurate value. This is the signal to either significantly redesign the implementation or discontinue it.

The disappearance test is not a replacement for quantitative measurement. It is a complement — a reality check that reveals whether the numbers on the dashboard translate to value that people actually experience in their work.
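If you ask more than a handful of users, it helps to tally the answers against the three categories rather than rely on the most memorable quote. The following is a small Python sketch of that tally. The enum names and the sample responses are hypothetical, and the reading attached to each category simply restates the interpretation given above.

```python
from collections import Counter
from enum import Enum


class Answer(Enum):
    """The three response categories, each paired with its reading."""
    SERIOUS_TROUBLE = "embedded in the work: strongest validation"
    OLD_WAY = "convenience, not transformation: continue but scrutinize cost"
    NOBODY_NOTICES = "running but not contributing: redesign or discontinue"


# Hypothetical responses from six primary users of one tool.
responses = [
    Answer.OLD_WAY,
    Answer.SERIOUS_TROUBLE,
    Answer.OLD_WAY,
    Answer.NOBODY_NOTICES,
    Answer.OLD_WAY,
    Answer.SERIOUS_TROUBLE,
]

tally = Counter(responses)
for answer in Answer:
    print(f"{tally[answer]} of {len(responses)}: {answer.value}")
```

The tally is a summary aid only. It does not turn the test into a score, and a single "we would be in serious trouble" from the person who runs the process may outweigh several shrugs from occasional users.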

How Should You Share the Results?

The final and often overlooked step in AI ROI measurement is sharing the results transparently with the organization. Not just the leadership team — the teams that use the tool, the teams that maintain it, and the teams whose budgets fund it.

Transparent sharing serves multiple purposes. It builds trust — people respect honest assessment, even when the numbers are mixed. It surfaces information that top-down measurement misses — users who see the numbers may volunteer context that changes the interpretation. And it creates accountability — when the measurement framework is visible, the incentive to manipulate the metrics decreases because the consequences of dishonesty are more visible.

The most effective approach is a simple quarterly report that presents the before-and-after ledger, the hidden cost inventory, and the net value calculation without spin. If the numbers are positive, celebrate and look for ways to extend the value. If the numbers are mixed, investigate and adjust. If the numbers are negative, make the hard decision early rather than letting a losing investment consume more resources.

Honest measurement starts during the pilot phase, not after full deployment.

When You Should Stop Measuring

This might seem like an odd thing to include in an article about measurement, but I think it needs to be said: there is a point where continued measurement becomes a form of avoidance.

If after 90 days the tool is clearly delivering value — the users depend on it, the before-and-after numbers are unambiguously positive, the team would be disrupted without it — stop measuring and start optimizing. I have seen companies spend more time measuring the ROI of a working tool than they spent evaluating it in the first place. Measurement is a means, not an end. Once the answer is clear, act on it.

Assessing readiness for measurement. Honest ROI measurement starts with clear foundations — you need to know what you are measuring and why before the tool is deployed. If you are still in the early stages of evaluating whether AI makes sense for your organization, the free AI Value Diagnostic at diagnostics.vectorcxo.com can help you assess your starting position.