When Your AI Tool Is Not Being Used

Why AI tools get purchased and not used — and a 60-day framework for diagnosing and fixing adoption failure.

The tool works. The vendor confirmed it. The accuracy is where it should be. The dashboard shows it is operational. And almost nobody is using it. This is the quiet epidemic of enterprise AI — tools that were purchased, deployed, and abandoned. Understanding why it happens is the first step toward fixing it.

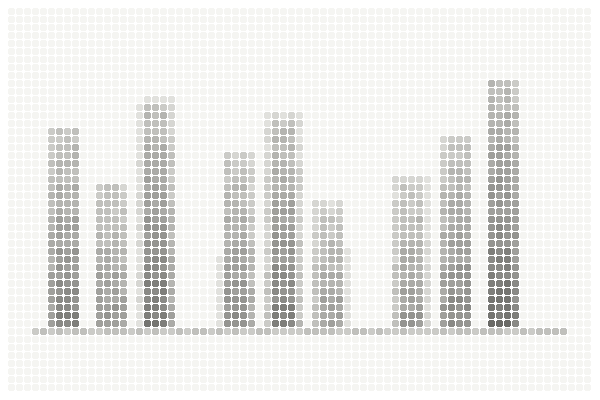

AI adoption failure does not announce itself dramatically. There is no system crash, no error message, no vendor notification. It happens gradually. Usage peaks in the first two weeks after launch — driven by training sessions, novelty, and managerial attention. Then it declines. After three months, a handful of people are using the tool regularly. After six months, the license is renewing but the value is not.

This pattern is remarkably common and remarkably consistent across industries, company sizes, and tool types. It is so common that it has earned an industry term — shelfware — though the term understates the seriousness of the problem. Shelfware is not just wasted license fees. It is wasted implementation effort, wasted organizational attention, and — most damagingly — eroded confidence in the organization's ability to adopt new technology.

Understanding why adoption fails is the prerequisite to fixing it. The causes are less mysterious than most teams assume, but they are almost never what the surface narrative suggests.

What Are the Real Causes of Low Adoption?

When asked why they stopped using an AI tool, people typically give practical reasons: "It was too slow," "The output was not reliable," "I did not have time to learn it properly." These explanations accurately describe symptoms, but they rarely capture the structural cause underneath.

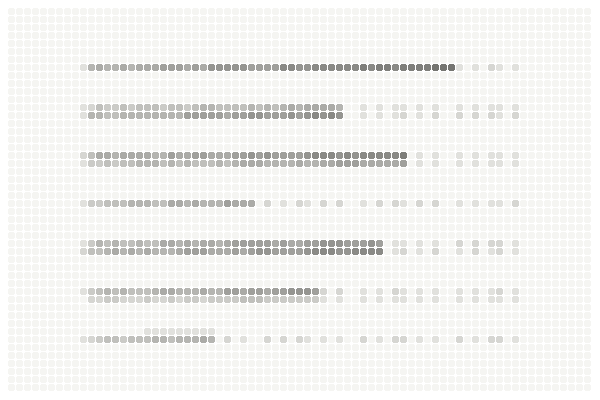

The first structural cause is workflow misalignment. The tool was designed for a version of the workflow that does not match how people actually work. This happens when the tool is scoped based on process documentation rather than process observation. The gap between documented and actual workflow might be small — an extra step here, a different sequence there — but small gaps compound into significant friction when a tool requires precise alignment with the workflow to be useful.

The fix for workflow misalignment is not training people to change their workflow. It is adjusting the tool to match the actual workflow. This often requires configuration changes, integration modifications, or — in some cases — acknowledging that the tool was scoped against the wrong process map and needs to be reconfigured from that starting point.

The second structural cause is net-negative convenience. The tool was supposed to make work easier. But when total effort is calculated — learning the new interface, entering data in a new format, checking the AI output before trusting it, dealing with exceptions the tool cannot handle — the total effort exceeds the effort of the old method. People are rational. If the new way is harder than the old way, they revert to the old way.

The fix for net-negative convenience requires honest measurement of total effort, not just the effort the tool eliminates. The question is not "does this tool save time on task X?" but "does this tool save more time on task X than it adds on tasks Y and Z?" If the answer is no, the implementation needs to be redesigned to reduce the added burden — through better integration, simpler interfaces, or automated handling of the exceptions that currently require manual intervention.
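The total-effort question can be made concrete with simple arithmetic. The sketch below is illustrative only: the `EffortItem` type, the function name, and every number in it are hypothetical, and real figures would have to come from observing actual users.

```python
from dataclasses import dataclass


@dataclass
class EffortItem:
    """One source of time saved or time added, in minutes per week."""
    label: str
    minutes_per_week: float


def net_minutes_saved(saved: list[EffortItem], added: list[EffortItem]) -> float:
    """Return total minutes saved per week minus total minutes added per week."""
    total_saved = sum(item.minutes_per_week for item in saved)
    total_added = sum(item.minutes_per_week for item in added)
    return total_saved - total_added


# Hypothetical figures for a single user, for illustration only.
saved = [EffortItem("time saved on task X", 120)]
added = [
    EffortItem("entering data in the new format", 45),
    EffortItem("checking AI output before trusting it", 60),
    EffortItem("handling exceptions by hand", 30),
]

net = net_minutes_saved(saved, added)
verdict = "net-positive" if net > 0 else "net-negative"
print(f"Net minutes saved per week: {net} ({verdict})")
```

With these made-up numbers the tool saves 120 minutes but adds 135, so a rational user reverts to the old method even though the headline saving on task X is real.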

The third structural cause is trust deficit. The tool produces outputs that are correct most of the time but wrong often enough that users feel they need to verify every output manually. This verification takes time and, more importantly, undermines the core value proposition: if you need to check every output, the tool is not saving time — it is adding a step.

The fix for trust deficit is transparency. Users need to understand when and why the tool might be wrong, what the confidence level of each output is, and which outputs require verification versus which can be trusted. Many AI tools have this capability but it is not always surfaced in the user interface. Working with the vendor to make confidence indicators visible and actionable can significantly reduce unnecessary verification and rebuild trust over time.
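One way to make confidence indicators actionable is to route outputs by score, so that only low-confidence results go to manual review. The sketch below assumes the tool exposes a confidence score between 0 and 1 for each output, which not every product does. The 0.9 threshold and the output identifiers are hypothetical and would need to be calibrated against observed error rates.

```python
def needs_verification(confidence: float, threshold: float = 0.9) -> bool:
    """Return True when an output's confidence is below the review threshold."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    return confidence < threshold


def split_by_confidence(
    outputs: list[tuple[str, float]], threshold: float = 0.9
) -> tuple[list[str], list[str]]:
    """Split (output_id, confidence) pairs into trusted and to-check lists."""
    trusted: list[str] = []
    to_check: list[str] = []
    for output_id, confidence in outputs:
        if needs_verification(confidence, threshold):
            to_check.append(output_id)
        else:
            trusted.append(output_id)
    return trusted, to_check


# Hypothetical outputs with assumed confidence scores.
outputs = [("inv-1", 0.97), ("inv-2", 0.62), ("inv-3", 0.90)]
trusted, to_check = split_by_confidence(outputs)
print("Trusted:", trusted)
print("Verify manually:", to_check)
```

The point of the split is that users stop verifying everything and verify only what the tool itself flags as uncertain.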

The fourth structural cause is absent feedback loops. Users encounter problems, develop workarounds, and move on. Nobody collects this information. Nobody acts on it. The problems persist, the workarounds become permanent, and the gap between the tool's potential and its actual usage widens over time.

The fix for absent feedback loops is structural: designate a specific person to collect user feedback weekly for the first 90 days, prioritize the most common issues, and work with the vendor or internal team to resolve them. The feedback loop does not need to be elaborate. It needs to exist and it needs to produce visible action.
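The weekly prioritization step does not need special tooling. As a minimal sketch, assuming each piece of feedback is logged with a short issue tag (the tags and the `top_issues` helper below are hypothetical), a simple tally is enough to show which problems to raise first:

```python
from collections import Counter


def top_issues(feedback_tags: list[str], n: int = 3) -> list[tuple[str, int]]:
    """Return the n most frequently reported issue tags with their counts."""
    # Normalize case and whitespace so "Slow export " and "slow export" match.
    counts = Counter(tag.strip().lower() for tag in feedback_tags)
    return counts.most_common(n)


# Hypothetical tags collected during one week of user conversations.
week_one = [
    "slow export",
    "Slow export ",
    "wrong totals",
    "slow export",
    "wrong totals",
    "login",
]

for tag, count in top_issues(week_one, n=2):
    print(f"{tag}: reported {count} times")
```

Publishing this short list each week, along with what was done about last week's list, is one way to make the resulting action visible.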

The fifth structural cause is champion departure. Many AI implementations depend on one or two internal advocates who drive the project forward, troubleshoot problems, and motivate the team through the adoption curve. When these champions change roles, leave the company, or simply move their attention to other priorities, the project loses its organizational energy. Without sustained advocacy, the tool drifts toward disuse.

The fix for champion departure is institutional rather than individual: embed the tool into team processes, performance metrics, and standard operating procedures so that its use is not dependent on any single person's advocacy. The tool should be part of how the team works, not a side project that one person maintains.

How Do You Recover Adoption in 60 Days?

If you have an AI tool with declining adoption, a structured recovery is possible. It requires honesty about what went wrong and willingness to invest the time that was not invested during the initial rollout.

The first two weeks should be spent on diagnosis. Not by reviewing vendor reports or usage dashboards — by sitting with the people who stopped using the tool and asking them why. Not in a group meeting where social pressure shapes the answers. Individually. Privately. With genuine curiosity rather than defensiveness. The goal is to identify which of the five structural causes is primary.

The next two weeks should be spent on targeted fixes. Based on the diagnosis, make the specific changes that address the primary cause. Depending on what you found, that means realigning the tool with the actual workflow, removing the steps that create net-negative convenience, surfacing confidence indicators, building a feedback loop, or assigning a new champion. Do not try to fix everything at once. Address the primary cause first.

The final four weeks should be spent on guided re-adoption. Not a relaunch announcement. Not mandatory training. A smaller, more honest process: work with a small group of willing users, implement the changes, validate that the fixes address the actual problems, and then expand gradually based on demonstrated improvement.

Adoption failure is almost never about the tool's capability. It is about the gap between the tool's design assumptions and the organization's reality. Closing that gap — through observation, honest diagnosis, and targeted adjustment — is how adoption is built. Not forced. Built.


How Do You Spot Adoption Failure Before It Becomes Obvious?

Adoption failure is easier to prevent than to reverse. But prevention requires recognizing the early warning signs — the indicators that appear in weeks two through six, before the usage numbers have fully declined but after the initial training honeymoon has ended.

The first warning sign is workaround proliferation. When users start building spreadsheets, manual processes, or informal systems that duplicate what the AI tool is supposed to do, they are telling you — through their behavior rather than their words — that the tool does not fit their workflow. This is not resistance. It is pragmatism. And it is the earliest and most reliable signal that something needs to change.

The second warning sign is selective usage. The tool is being used for some tasks but avoided for others, even when it was designed to handle both. This usually indicates that the tool works well for straightforward cases but fails on the exceptions and edge cases that represent a significant portion of real work. The users have learned which scenarios the tool handles well and are routing only those through it.

The third warning sign is champion dependency. If adoption is strong when the internal champion is present and drops when they are unavailable — on vacation, in meetings, working on other priorities — the tool has not been institutionalized. It is running on personal energy rather than organizational integration. This is sustainable for weeks. It is not sustainable for months.
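Champion dependency can be checked against usage data by comparing days when the champion is present with days when they are not. The sketch below assumes you can export daily active-user counts and know which days the champion was available. The function name and the sample figures are hypothetical.

```python
from statistics import mean


def champion_dependency_ratio(daily_usage: list[tuple[int, bool]]) -> float:
    """Return mean usage on champion-absent days divided by mean usage on
    champion-present days. Each entry is (active_users, champion_present)."""
    present = [users for users, here in daily_usage if here]
    absent = [users for users, here in daily_usage if not here]
    if not present or not absent:
        raise ValueError("need at least one present day and one absent day")
    present_mean = mean(present)
    if present_mean == 0:
        raise ValueError("no usage recorded on champion-present days")
    return mean(absent) / present_mean


# Hypothetical week: the champion was out on the last two days.
week = [(20, True), (22, True), (18, True), (9, False), (11, False)]
ratio = champion_dependency_ratio(week)
print(f"Usage without the champion is {ratio:.0%} of usage with them")
```

A ratio close to 1 suggests the tool has been institutionalized. A ratio well below 1, as in this made-up week, suggests it is running on one person's energy.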

The fourth warning sign is that questions about the tool stop. In the early weeks, users ask how to handle specific scenarios, what to do when the tool produces unexpected output, and how to interpret certain results. When these questions stop, it can mean the team has reached proficiency. It can also mean they have stopped trying. The difference is visible in the usage data: if questions stop and usage is stable, proficiency has been reached. If questions stop and usage is declining, they have given up.
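The distinction between proficiency and disengagement can be written as a small decision rule. This is a sketch under stated assumptions: the three-week window, the 10 percent decline tolerance, and the labels are all hypothetical choices, not established thresholds.

```python
def classify_quiet_period(
    weekly_questions: list[int],
    weekly_active_users: list[int],
    window: int = 3,
    decline_tolerance: float = 0.10,
) -> str:
    """Classify the most recent `window` weeks from question and usage counts."""
    if len(weekly_questions) < window or len(weekly_active_users) < window:
        raise ValueError("not enough weeks of data for the chosen window")

    recent_questions = weekly_questions[-window:]
    recent_usage = weekly_active_users[-window:]

    # Questions are still coming in, so the team is still actively learning.
    if sum(recent_questions) > 0:
        return "still asking"

    start, end = recent_usage[0], recent_usage[-1]
    if start == 0:
        return "likely disengaged"

    # Relative change in usage across the window (negative means decline).
    change = (end - start) / start
    if change >= -decline_tolerance:
        return "likely proficient"
    return "likely disengaged"


# Hypothetical data: questions stop in both cases, but usage differs.
print(classify_quiet_period([12, 8, 0, 0, 0], [30, 29, 28, 28, 27]))
print(classify_quiet_period([12, 8, 0, 0, 0], [30, 25, 20, 15, 10]))
```

The rule only flags where to look. A "likely disengaged" result is a prompt to go and talk to users, not a diagnosis in itself.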

Each of these signs, caught early, can be addressed with targeted intervention. Caught late — after usage has fully declined and the organizational narrative has shifted to “that AI thing did not work” — recovery becomes significantly harder and more expensive.

Prevention is more effective than recovery. Most adoption failures can be prevented by assessing organizational readiness before the tool is purchased. The free AI Value Diagnostic at diagnostics.vectorcxo.com evaluates the readiness dimensions — process clarity, stakeholder alignment, change capacity — that most directly predict adoption success.