The AI Vendor Evaluation Checklist

A printable 20-item scorecard for evaluating AI vendors. Score demos objectively and compare vendors side by side.

This is not an article about how to think about AI vendor evaluation. There are plenty of those. This is the actual checklist: the one you print, bring to the demo, and score in real time. The thinking behind the structure comes after; use it if you want the context, skip it if you just need the tool.

A CTO at a mid-market financial services firm told me she had sat through eleven AI vendor demos in three months. By the end, they all blurred together. Every demo was impressive. Every vendor was confident. And she had no structured way to compare what she had seen.

So we built her a scorecard. Twenty items across five categories, each scored 1 to 5. She printed five copies — one for each person in the evaluation room — and they scored independently during the next demo.

The results were revealing. Not because the vendor scored poorly, but because the five evaluators scored the same demo differently. The CTO gave integration honesty a 4; her IT lead gave it a 2. That gap — invisible until they compared scores — surfaced a concern that would have become a six-figure problem during implementation.

Here is the checklist. Print it. Use it. The disagreements between your evaluators are where the real insights live.

The Checklist

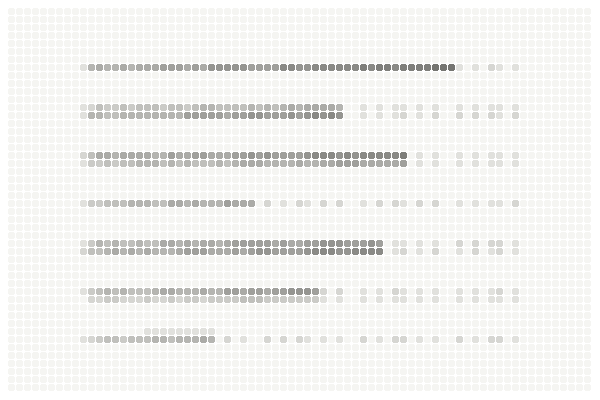

Category 1: Data Reality (score each 1–5)

Demo used our actual data, not the vendor's curated dataset

Vendor demonstrated what happens when input data is messy or incomplete

Data preprocessing requirements were stated explicitly (not assumed)

Vendor acknowledged the gap between demo data quality and our data quality

Category 2: Integration Honesty (score each 1–5)

Vendor stated their assumptions about our existing systems explicitly

Vendor offered (or agreed to) a pre-scope site visit to see our actual environment

Integration timeline accounts for middleware, data mapping, and testing

Vendor shared a specific example of a difficult integration and how it was resolved

Category 3: Implementation Reality (score each 1–5)

Vendor discussed at least one failed or difficult implementation honestly

Specific workflow changes for our end users were identified and discussed

Timeline includes data cleanup, training, and change management, not just technical deployment

Vendor explained what ongoing support looks like after go-live

Category 4: Performance Accountability (score each 1–5)

Vendor differentiated between demo performance and expected real-world performance

Error handling and escalation paths were demonstrated, not just mentioned

Vendor agreed to a parallel proof of concept on our data before contract

SLA or performance benchmarks are based on POC results, not demo numbers

Category 5: Long-Term Viability (score each 1–5)

Ongoing maintenance responsibilities (ours vs. theirs) were clearly defined

Data ownership and portability after contract ends were addressed

Vendor provided a recent reference (last 12 months) from a company of similar size and industry

Total cost of ownership — not just license — was discussed proactively

How to Score

Score each of the 20 items from 1 to 5, for a maximum total of 100 per evaluator. But here is the part that matters more than the total score: compare the individual scores from each evaluator. Where two people in the same room scored the same item differently by 2 or more points, you have found a gap in shared understanding that needs a conversation before anyone signs anything.
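If you collect the scores in a spreadsheet or script rather than on paper, the comparison can be automated. What follows is a minimal Python sketch, not a finished tool. The function names, the shortened item labels, and every score except the CTO's 4 and the IT lead's 2 are assumptions made up for illustration.

```python
def total_score(sheet):
    """Sum one evaluator's item scores (max 100 for 20 items scored 1 to 5)."""
    return sum(sheet.values())


def flag_disagreements(scores, threshold=2):
    """Return {item: {evaluator: score}} for items worth a conversation.

    `scores` maps evaluator name -> {item label -> score}.
    An item is flagged when the highest and lowest score differ by at
    least `threshold`, which means at least two evaluators are that far apart.
    """
    first_sheet = next(iter(scores.values()))
    flagged = {}
    for item in first_sheet:
        by_evaluator = {name: sheet[item] for name, sheet in scores.items()}
        spread = max(by_evaluator.values()) - min(by_evaluator.values())
        if spread >= threshold:
            flagged[item] = by_evaluator
    return flagged


# Hypothetical scores for three of the 20 items; labels are shortened
# for illustration and are not the official item wording.
scores = {
    "CTO": {
        "actual data used": 3,
        "system assumptions stated": 4,
        "TCO discussed": 5,
    },
    "IT lead": {
        "actual data used": 3,
        "system assumptions stated": 2,
        "TCO discussed": 4,
    },
}

flagged = flag_disagreements(scores)
for item, by_evaluator in flagged.items():
    print(f"Discuss before deciding: {item} -> {by_evaluator}")
```

The sketch flags on the spread between the highest and lowest score rather than on the average, because averaging a 4 and a 2 into a 3 would hide exactly the gap you are looking for.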

Forward to your team

Before our next vendor demo, print one copy of this checklist for each person in the room. Score independently during the demo. Compare scores after. The items where we disagree by 2+ points are the ones we need to discuss before making a decision. Takes 5 minutes to compare; could save us months of misalignment.

Why This Structure Works

Most vendor evaluations fail not because the team picks the wrong vendor, but because different people in the room are evaluating different things. The CTO watches the technical architecture. The CFO listens for pricing signals. The operations lead worries about disruption. Each person leaves the demo with a different impression, and the impressions are never reconciled because there is no shared framework to reconcile them against.

This checklist forces the reconciliation. Not through discussion (which tends to defer to the most senior person in the room) but through independent scoring that makes disagreements visible and specific.

I learned this the hard way. Early in my consulting work, I facilitated vendor evaluations by asking the team to discuss each demo after the fact. The discussions were always dominated by whoever spoke first or spoke loudest. The quieter evaluators — often the ones with the most operational insight — deferred. Bad signals got buried under social dynamics.

Independent scoring changes that. When the IT lead's score of 2 sits next to the CTO's score of 4 on the same item, neither person can ignore the gap. The conversation that follows is specific, bounded, and productive in a way that open-ended post-demo discussions rarely are.

What This Checklist Cannot Do

I want to be honest about the limits here. This checklist evaluates vendors. It does not evaluate whether your organization is ready to buy from any vendor at all. If your data is not clean, your processes are not documented, or your stakeholders are not aligned on what success looks like, even the highest-scoring vendor will struggle to deliver value.

The vendor evaluation is the middle step in a three-step process. The first step is readiness assessment (are we ready to buy?). The second is vendor evaluation (who should we buy from?). The third is pilot design (does the tool actually work in our environment?). In my experience, skipping the first step and jumping to the second is the most common, and the most expensive, sequencing mistake in enterprise AI.

The best vendor evaluation is not the one that picks the best vendor. It is the one that makes every evaluator's perspective visible, reconciles the disagreements before the decision, and ensures the team is buying with shared understanding rather than assumed agreement.

Not sure if you are ready for vendor conversations? The free AI Value Diagnostic at diagnostics.vectorcxo.com takes about 10 minutes and tells you whether your foundations — data, process, alignment — are strong enough to make a vendor evaluation productive. Better to know before the demo than after the contract.

Print the checklist. Score independently. Compare. The tool is free; the conversations it produces are worth more than most consulting engagements I have seen.